My MODX caching policy

Posted on Jun 04, 2014 by Mike Nuttall

Google has told us that the speed of your website can affect your Google search engine ranking, but they didn't tell us exactly which aspect. This article at moz.com tells us a lot more specifically, after they commissioned some research. What they found was that the Time To First Byte (TTFB) of your website seemed to be the most significant influence.

So I decided to have a look at the TTFB of my website, which I had, to be honest, neglected for some time.

I used this command to find the TTFB value:

curl -s -o /dev/null -w "%{time_starttransfer}\n" http://onsitenow.co.uk/
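A single reading can be noisy, so it may be worth averaging a few. The following is only a sketch built around the same curl command; the function names (`ttfb`, `average`, `mean_ttfb`) and the default of five runs are my own assumptions, not part of any tool.

```shell
#!/bin/sh
# Print the time to first byte (in seconds) for one URL.
ttfb() {
    curl -s -o /dev/null -w '%{time_starttransfer}\n' "$1"
}

# Average the numbers read from stdin (one per line), to 3 decimals.
average() {
    LC_ALL=C awk '{ sum += $1; n++ } END { if (n > 0) printf "%.3f\n", sum / n }'
}

# Request a URL several times (default 5) and print the mean TTFB.
mean_ttfb() {
    url=$1
    runs=${2:-5}
    i=0
    while [ "$i" -lt "$runs" ]; do
        ttfb "$url"
        i=$((i + 1))
    done | average
}

# Only hit the network when a URL is passed on the command line.
if [ "$#" -gt 0 ]; then
    mean_ttfb "$@"
fi
```

Saved as, say, `mean-ttfb.sh` (a hypothetical filename), it could be run as `sh mean-ttfb.sh http://onsitenow.co.uk/ 10` to average ten requests.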

So what were the results?

My pages were getting values of about 0.65 seconds. I compared this to other sites that ranked well for my target keywords. The top sites were getting values from 0.15 to 0.68, which is quite a range, and my value was within it, so I wasn't too concerned.

But I thought I would have a look to see if I could speed things up.

Yikes! The first thing I discovered was some uncached snippets. That was sloppy. So I got them sorted before anything else.

To quote opengeek, one of the main architects behind MODX: "Always Be Caching!".

Anyway, that sped things up a bit: I got my TTFB down to the 0.45 mark. Nice.

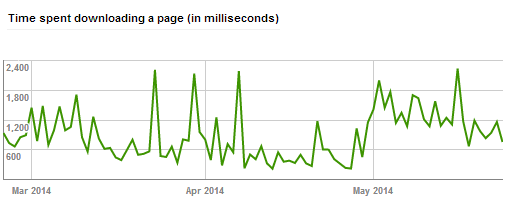

But, having been doing a lot of blogging recently, I noticed in Webmaster Tools that Googlebot had been taking a lot longer to load my pages than in previous months.

So I started finding the TTFB for every page before and after the cache was cleared using this script.
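For anyone who wants to try something similar, here is a minimal sketch of the idea, not necessarily the exact script. It assumes the site publishes a standard sitemap.xml with the page URLs in `<loc>` elements; the function names `extract_locs` and `ttfb_all` are made up for illustration.

```shell
#!/bin/sh
# Pull the URLs out of a sitemap read from stdin.
# Assumes plain <loc>...</loc> elements; XML entities such as &amp; are not decoded.
extract_locs() {
    tr '<' '\n' | sed -n 's/^loc>\(.*\)$/\1/p' | sed 's/[[:space:]]*$//'
}

# Fetch the sitemap, then request every page once and print "TTFB URL".
# Requesting a page also makes the site re-cache it if its cache was empty.
ttfb_all() {
    curl -s "$1" | extract_locs | while read -r url; do
        t=$(curl -s -o /dev/null -w '%{time_starttransfer}' "$url")
        printf '%s %s\n' "$t" "$url"
    done
}

# Only hit the network when a sitemap URL is passed on the command line.
if [ "$#" -gt 0 ]; then
    ttfb_all "$1"
fi
```

Running it once straight after clearing the cache and once again afterwards gives the uncached and cached timings for each page. Piping the output through `sort -rn` puts the slowest pages at the top.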

I was making lots of changes and amendments, so every time I saved, the cache was being cleared, and this slowed all my pages down again to the 0.65 region (and it must have been even slower when I was using the uncached snippets). That doesn't seem too bad, but my ranking had dropped recently, so I was concerned that it was an issue.

My first reaction was to use the above curl script after every save, since a consequence of running it is that all the pages on the site get re-cached.

But was there anything else I could do? What extras might there be available to me? I read this article by JP Devries. Well this all sounds very interesting.

I first tried to get opengeek's RegenCache snippet working but after a while trying to hack that I had to give up. It's supposed to work outside MODX linked to a cron job, but my site is on MODX Cloud and, as far as I know, cron isn't available.

Next I installed xFPC (MODX Full Page Cache) with amazing results. It got my TTFB down to an average of 0.13, which is faster than all the other websites competing for my keywords. Cool!

That was actually faster than some static HTML documents I tested straight from the server (i.e. not via MODX or PHP). How it does that I don't know; maybe it uses some sort of compression/decompression with the client browser?

There was one downside though: when the cache is cleared, xFPC takes a bit longer to initially cache the pages, up to 0.9 and even 1 second at times.
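One way to test the compression guess is to ask for a compressed response and look at the headers that come back. This is just a diagnostic sketch, and the function names `content_encoding` and `check_compression` are my own; it only shows whether the server reports compressing the response, not whether that explains the speed.

```shell
#!/bin/sh
# Read HTTP response headers from stdin and print the Content-Encoding
# value (e.g. "gzip"), or nothing if the header is absent.
content_encoding() {
    tr -d '\r' | awk -F': *' 'tolower($1) == "content-encoding" { print tolower($2) }'
}

# Request a URL while advertising gzip/deflate support, discard the body,
# and report which encoding (if any) the server says it used.
check_compression() {
    curl -s -o /dev/null -D - -H 'Accept-Encoding: gzip, deflate' "$1" | content_encoding
}

# Only hit the network when a URL is passed on the command line.
if [ "$#" -gt 0 ]; then
    check_compression "$1"
fi
```

If it prints `gzip` for the MODX page but nothing for the static file, the two responses are at least not being served the same way.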

So then it was time to look at another Extra by opengeek: getCache. getCache lets you save the output of elements in their own caches, so they don't get cleared when the main cache is cleared. This means the re-caching of the pages should be quicker, because that particular element doesn't have to re-run.

That worked too: it got my TTFB for uncached pages down to 0.4 - 0.6, which is still acceptable.

But I still have to run my script to re-cache the pages after I make changes, which is a bit of a pain. There's also a plugin by Bob Ray that does this, called RefreshCache, but my little command line script seems a lot faster. It also runs from the sitemap.xml rather than over all published cacheable pages, which is probably unnecessary: some of these will be thank-you pages which Googlebot doesn't see, and which will not have much content anyway, so they should be quite speedy for the user even if uncached.

And there are other Extras that might help when saving resources: cacheguard by dinocorn, which simply unticks the "Empty Cache" checkbox on unpublished resources.

And another by Bob Ray called CacheMaster, which allows you to clear the cache of a single resource rather than the whole site. It also unticks the "Empty Cache" checkbox when you edit a resource.

But I couldn't get either of these last two to play with Articles resources, which is my main need. Admittedly I didn't try for very long (clients calling), so it might be worth further exploration.

One I want to check out in the future is StatCache, which writes pages to file and then uses the web server's rewrite engine to serve the static files first. You can't do dynamic content with this, though, which might be OK for some blogging sites.